Cliff Notes#

Condensed Cheatsheet/mnemonics of stuff I forget

Overview: Map of Mathematics#

- Algebra: abstraction of numbers

- Group Theory: abstraction of symmetry

- Ring Theory: abstraction of arithmetic

- Graph Theory: abstraction of relationships

- Category Theory: abstraction of composition

Linear Algebra#

Differential Geometry#

- Inner Product: Angle

- Norm: Length

- Metric: Distance

- Measure: Volume/Size

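- \(L_p\) norm: \(\|\vec{v}\|_p = \left(\sum_i |v_i|^p\right)^{1/p}\)

- \(L_\infty\) norm: max() of the absolute vector components

- \(L_0\) norm: Counting Norm (number of nonzero components; not a true norm)

- Gradient: Derivative of scalar field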

- Divergence: Sink vs Source aka volume density of outward flux

- Curl: Rotation Rate around point

- Laplacian: Difference between the average over a small neighborhood of a point and the value at that point

- Jacobian: Gradient of vector field

- describes skew/rotation/distortion of differential patch around \(f(\vec p)\)

- analogue of 1st order Taylor polynomial, i.e. best linear approximation of the rate of change: \(f(\vec{p} + \varepsilon \vec{h})\approx f(\vec{p} )+\mathbf{J}_{f} (\vec{p})\cdot \varepsilon \vec{h}\)

- Laplace Equation: \(\Delta u = 0\), i.e. maximal smoothness/mean curvature is zero

- intuitive as an equilibrium steady state, e.g. diffuse heat flow

- intuitive as a surface with no bumps, i.e. no local maxima or minima in the interior

- intuition from CMU Discrete Differential Geometry Course: Lecture 18

- Poisson Equation: Generalization of Laplace Equation with a source term, \(\Delta u = f\)

- intuitive as soap film (PDE solution) covering a wire (boundary condition)

- Manifold: fancy name for a curved space

- Riemannian manifold: manifold with a Riemannian metric, i.e. a smoothly varying inner product on the tangent spaces that defines geodesic distance

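- Functionals: Functions that take functions as inputs (e.g. derivative/integral operators)

- Spaces: Banach Space ⊇ Hilbert Space ⊇ Sobolev Space \(H^s\) (general Sobolev spaces \(W^{s,p}\) with \(p \neq 2\) are Banach but not Hilbert)

- Banach Space: norm + completeness

- Hilbert Space: complete space whose norm comes from an inner product

- Sobolev Space: functions with nice (weak, integrable) derivatives up to order \(s\)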

- Group: closed under multiplication, associative, identity element, inverses (commutativity is not required)

- Lie Group: curved space with a group structure i.e. a group that is a manifold where multiplication is smooth/infinitely differentiable

- Lie Algebra: tangent space of a Lie group at the identity

- Tangent space: linear approximation of a curved space

- Non-abelian group: non-commutative group i.e. \(a*b \neq b*a\) (e.g. SO(3) rotation group)

- Dual number: convenient for computation of Lie algebra
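A few of the one-line definitions in the list above can be checked numerically. The following is a minimal Python sketch, not a reference implementation: the function names `lp_norm`, `jacobian`, and `discrete_laplacian` are made up for illustration, the Jacobian uses central finite differences with an assumed step `h`, and the Laplacian assumes a regular 2D grid with unit spacing.

```python
import math

def lp_norm(v, p):
    """L_p norm of a vector; p = math.inf gives the max-component norm."""
    if p == math.inf:
        return max(abs(x) for x in v)
    return sum(abs(x) ** p for x in v) ** (1.0 / p)

def jacobian(f, p, h=1e-6):
    """Central finite-difference Jacobian of f: R^n -> R^m at the point p."""
    m = len(f(p))
    J = [[0.0] * len(p) for _ in range(m)]
    for j in range(len(p)):
        plus, minus = list(p), list(p)
        plus[j] += h
        minus[j] -= h
        f_plus, f_minus = f(plus), f(minus)
        for i in range(m):
            J[i][j] = (f_plus[i] - f_minus[i]) / (2 * h)
    return J

def discrete_laplacian(u, i, j):
    """5-point stencil: 4 * (average of the four neighbours - value at the point)."""
    avg = (u[i - 1][j] + u[i + 1][j] + u[i][j - 1] + u[i][j + 1]) / 4.0
    return 4.0 * (avg - u[i][j])

# Example vector field f(x, y) = (x*y, x + y^2), chosen only for illustration.
def f(p):
    x, y = p
    return [x * y, x + y * y]

# First-order Taylor check: f(p + eps*h) ~ f(p) + J(p) . (eps*h)
p, hvec, eps = [1.0, 2.0], [0.3, -0.2], 1e-3
J = jacobian(f, p)
exact = f([p[k] + eps * hvec[k] for k in range(2)])
linear = [f(p)[i] + sum(J[i][k] * eps * hvec[k] for k in range(2)) for i in range(2)]

# u = x^2 - y^2 is harmonic, so its discrete Laplacian vanishes.
harmonic = [[i * i - j * j for j in range(5)] for i in range(5)]
```

The gap between `exact` and `linear` shrinks like `eps**2`, which is what "best linear approximation" means in practice.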

Equations#

Spectral Theory#

Legendre polynomial#

The nth Legendre polynomial, \(\boldsymbol{L}_{n}\), is orthogonal on \([-1,1]\) to every polynomial of degree less than n, i.e.

- \(\boldsymbol{L}_{n} \perp \boldsymbol{P}_{i}, \ \forall i\in [0..n-1]\)

- ex: \(\boldsymbol{L}_{n} \perp x^{3}\) for any \(n > 3\)

\(\boldsymbol{L}_{n}\) has n distinct real roots and they are all \(\in (-1,1)\)
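The orthogonality claim can be verified exactly with rational arithmetic. This is a rough sketch under stated assumptions: polynomials are stored as coefficient lists (lowest degree first), the Legendre polynomials are built with Bonnet's recurrence, and the helper names `legendre` and `inner` are illustrative only.

```python
from fractions import Fraction

def legendre(n):
    """Coefficients (lowest degree first) of the nth Legendre polynomial."""
    p_prev, p = [Fraction(1)], [Fraction(0), Fraction(1)]
    if n == 0:
        return p_prev
    for k in range(1, n):
        # Bonnet's recurrence: (k+1) P_{k+1} = (2k+1) x P_k - k P_{k-1}
        nxt = [Fraction(2 * k + 1) * c for c in [Fraction(0)] + p]
        for i, c in enumerate(p_prev):
            nxt[i] -= k * c
        p_prev, p = p, [c / (k + 1) for c in nxt]
    return p

def inner(a, b):
    """Exact integral of a(x) * b(x) over [-1, 1]."""
    prod = [Fraction(0)] * (len(a) + len(b) - 1)
    for i, ai in enumerate(a):
        for j, bj in enumerate(b):
            prod[i + j] += ai * bj
    # The integral of x^m over [-1, 1] is 2/(m+1) for even m and 0 for odd m.
    return sum(c * Fraction(2, m + 1) for m, c in enumerate(prod) if m % 2 == 0)

x_cubed = [0, 0, 0, 1]
print(inner(legendre(4), x_cubed))  # L_4 is orthogonal to x^3
print(inner(legendre(3), x_cubed))  # L_3 is not: the degree is not below n
```

Using `Fraction` avoids any floating-point tolerance, so orthogonality shows up as an exact zero.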

Harmonic functions => \(\Delta u(x) = 0\)

Homogeneous function of degree k => \(f : \mathbb{R}^{n} \to \mathbb{R}^{n}, \ f(\lambda \mathbf{v})=\lambda^{k} f(\mathbf{v})\) where \(k,\lambda \in \mathbb{R}\)

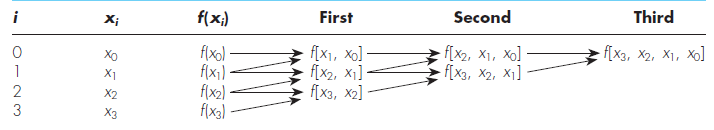

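General form of Newton's divided-difference polynomial interpolation:

\(p_{n}(x) = f[x_{0}] + f[x_{0},x_{1}](x-x_{0}) + \cdots + f[x_{0},\ldots,x_{n}](x-x_{0})\cdots(x-x_{n-1})\)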

Lagrange interpolating polynomial scheme is just a reformulation of Newton scheme that avoids computation of divided differences
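To see that the two schemes describe the same polynomial, here is a small Python sketch that evaluates both forms on the same sample points. The function names and the sample data (points taken from \(x^3 - 2x + 1\)) are chosen purely for illustration.

```python
def divided_differences(xs, ys):
    """Newton coefficients f[x0], f[x0,x1], ..., f[x0..xn], computed in place."""
    coef = list(ys)
    n = len(xs)
    for j in range(1, n):
        # Walk backwards so lower-order differences are still available.
        for i in range(n - 1, j - 1, -1):
            coef[i] = (coef[i] - coef[i - 1]) / (xs[i] - xs[i - j])
    return coef

def newton_eval(xs, coef, x):
    """Evaluate the Newton form with Horner-style nesting."""
    result = coef[-1]
    for i in range(len(coef) - 2, -1, -1):
        result = result * (x - xs[i]) + coef[i]
    return result

def lagrange_eval(xs, ys, x):
    """Evaluate the Lagrange form directly; no divided differences needed."""
    total = 0.0
    for i, (xi, yi) in enumerate(zip(xs, ys)):
        term = yi
        for j, xj in enumerate(xs):
            if j != i:
                term *= (x - xj) / (xi - xj)
        total += term
    return total

xs = [0.0, 1.0, 2.0, 4.0]
ys = [x**3 - 2 * x + 1 for x in xs]   # samples of a cubic
coef = divided_differences(xs, ys)
print(newton_eval(xs, coef, 3.0), lagrange_eval(xs, ys, 3.0))
```

Both evaluations agree (up to round-off) with the underlying cubic at \(x = 3\), since four points determine a cubic uniquely.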

Gaussian quadrature#

- integrates exactly any \(f(x) \in P_{2n-1}\) (polynomials of degree up to \(2n-1\)) using only n nodes and n weights
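As a quick sanity check of the \(2n-1\) exactness property, the sketch below hard-codes the standard 3-point Gauss-Legendre rule on \([-1,1]\) (nodes \(0, \pm\sqrt{3/5}\), weights \(8/9, 5/9\)). The function name `gauss3` is illustrative.

```python
import math

# 3-point Gauss-Legendre rule on [-1, 1]: nodes are the roots of L_3.
NODES = [-math.sqrt(3 / 5), 0.0, math.sqrt(3 / 5)]
WEIGHTS = [5 / 9, 8 / 9, 5 / 9]

def gauss3(f):
    """Approximate the integral of f over [-1, 1] with three evaluations."""
    return sum(w * f(x) for x, w in zip(NODES, WEIGHTS))

# Degree 5 = 2*3 - 1: integrated exactly (true value is 2/5).
deg5 = gauss3(lambda x: x**5 + x**4)
# Degree 6: no longer exact (true value is 2/7).
deg6 = gauss3(lambda x: x**6)
print(deg5, deg6)
```

With three nodes the rule is exact up to degree 5 and first fails at degree 6.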

Approximation Schemes#

- Regression Schemes: (Linear or nonlinear)

- Curves do not necessarily go through sample points so error at said points might be large

- Round-off error becomes pronounced for higher order versions and ill-conditioned matrices are a problem

- Orthogonal polynomials do not necessarily suffer from this

- Interpolation Schemes: (splines, lagrangian/newtonian, etc)

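- Curves must go through sample points so error at said points is zero (up to round-off)

- Piecewise schemes such as splines are generally not ill-conditioned; high-degree polynomial interpolation on equispaced points can still oscillate badly (Runge's phenomenon)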

Thin plate splines#

- construction is based on choosing a function that minimizes an integral that represents the bending energy of a surface

- the idea of thin-plate splines is to choose a function \(f(x)\) that exactly interpolates the datapoints \((x_i, y_i)\), i.e. \(y_i = f(x_i)\), and that minimizes the bending energy

\(E[f]=\int_{\mathbb{R}^{n}}\left|D^{2} f\right|^{2} d X\)

- Can also choose a function that doesn't exactly interpolate all control points by using a smoothing parameter \(\lambda\) for regularization

\(E[f]=\sum_{i=1}^{m}\left|f\left(\mathbf{x}_{i}\right)-y_{i}\right|^{2}+\lambda \int_{\mathbb{R}^{n}}\left|D^{2} f\right|^{2} d X\)

Spherical Basis Splines#

- Gross reduction summary: b-splines with slerp instead of lerp between control points
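The lerp-to-slerp swap is the key ingredient, so here is a minimal sketch of slerp between two unit vectors. It assumes the inputs are already normalized and not antipodal; the function name `slerp` and the sample points are illustrative, and this is only the interpolation primitive, not a full spherical B-spline.

```python
import math

def slerp(p, q, t):
    """Spherical linear interpolation between unit vectors p and q, t in [0, 1]."""
    # Clamp the dot product to guard acos against round-off.
    dot = max(-1.0, min(1.0, sum(a * b for a, b in zip(p, q))))
    omega = math.acos(dot)            # angle between p and q
    if omega < 1e-12:                 # (nearly) identical points
        return tuple(p)
    s = math.sin(omega)
    w0 = math.sin((1 - t) * omega) / s
    w1 = math.sin(t * omega) / s
    return tuple(w0 * a + w1 * b for a, b in zip(p, q))

mid = slerp((1.0, 0.0, 0.0), (0.0, 1.0, 0.0), 0.5)
print(mid, math.sqrt(sum(c * c for c in mid)))
```

Unlike lerp, the interpolated point stays on the unit sphere and moves at constant angular speed along the great-circle arc.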

RBF#

- Integration By RBF Over The Sphere

- RBF for Scientific computing

- Interpolation and Best Approximation for Spherical Radial Basis Function Networks

- Spherical Radial Basis Functions, Theory and Applications (Springer Briefs in Mathematics)

- Transport schemes on a sphere using radial basis functions

- On choosing a radial basis function and a shape parameter when solving a convective PDE on a sphere

- A Fast Algorithm For Spherical Basis approximation

Spherical Splines#

- Spline Representations of Functions on a Sphere for Geopotential Modeling

- Fitting scattered data on sphere-like surfaces using spherical splines

- Bernstein-Bézier polynomials on spheres and sphere-like surfaces

- Survey on Spherical Spline Approximation

- Scattered data fitting on the sphere